Updated: June 12, 2023

The Director of Customer Experience was proud of her company’s new customer service survey. She had been a strong advocate for collecting voice of customer feedback and now they were finally getting it.

"That's great!" I said. "What are you doing with the data?"

There was an awkward silence. Finally, she replied, "Uh, we report the numbers in our regular executive meeting."

That was it. The survey was doing nothing to generate insights that could help improve customer experience, increase customer loyalty, or prevent customer churn.

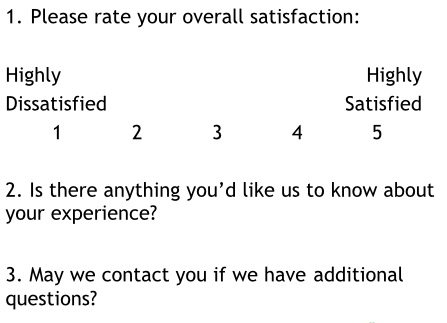

One problem was the survey had no comment section. Customers could rate their experience, but there was no place for them to explain why they gave that rating.

Comments are critical because they tell you what your customers are thinking and what you need to do to improve. But having a comment section isn't enough.

You need to know how to analyze those comments. This guide can help you.

Why Survey Comments Matter

Comments provide context behind numerical ratings. They help explain what makes customers happy, what frustrates them, and what you can do better.

Let’s look at the Google reviews for a Discount Tire store in San Diego. As of June 12, 2023, the store has a 4.5 star rating on Google from 482 reviews.

That's great news, but two big questions remain if you’re the store manager:

How did your store earn that rating? (You want to sustain it!)

What's preventing even better results? (You want to improve.)

The rating alone doesn't tell you very much. You need to look at the comments people write when they give those ratings to learn more.

The challenge is that the comments are freeform. You'll need a way to quickly spot trends.

Analyze Survey Comments for Trends

The good news is you can do this by hand. It took me less than 30 minutes to do the analysis I'm going to show you.

Start with a check sheet. You can do this on a piece of paper or open a new document and create a table like the one below. Create a separate column for each possible rating on the survey.

Next, read each survey comment and try to spot any themes that stand out as the reason the customer gave that rating. Record those themes on your check sheet in the column that matches the star rating for that review.

For example, what themes do you see in this five star review?

I recorded the following themes on my check sheet:

Now repeat this for all of the reviews. (If you have a lot of reviews, you can stick to a specific time frame, such as the past three months.) Look for similar words or phrases that mean the same thing and put a check or star next to each theme that's repeated.

Once you've completed all of the reviews, tally up the themes that received the most mentions. Here are the top reasons people give a 5 star rating for this Discount Tire store:

Fast service: 72%

Good prices: 35%

Friendly employees: 23%
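If you'd rather keep your check sheet in a script than on paper, the same steps can be sketched in a few lines of Python. This is only a rough illustration, not the method I used for the analysis above. The sample reviews are made up, the `THEME_ALIASES` map and the function names are hypothetical, and it assumes each percentage means the share of reviews at a given rating that mention the theme (which is why the numbers can add up to more than 100%). You still read each comment yourself and decide which phrases stand out.

```python
from collections import Counter, defaultdict

# Hypothetical synonym map: phrases you decide mean the same theme.
THEME_ALIASES = {
    "quick": "fast service",
    "fast": "fast service",
    "in and out": "fast service",
    "affordable": "good prices",
    "great price": "good prices",
    "friendly": "friendly employees",
    "helpful staff": "friendly employees",
}


def new_check_sheet():
    """One column (a Counter) per star rating, plus a review count per rating."""
    return {"themes": defaultdict(Counter), "reviews": Counter()}


def record_review(sheet, stars, phrases):
    """Record one review: map its phrases to themes and check each theme once."""
    themes = {THEME_ALIASES.get(p.lower(), p.lower()) for p in phrases}
    sheet["reviews"][stars] += 1
    sheet["themes"][stars].update(themes)


def top_themes(sheet, stars, n=3):
    """Return (theme, percent of reviews at this rating that mention it)."""
    total = sheet["reviews"][stars]
    if total == 0:
        return []
    return [
        (theme, round(100 * count / total))
        for theme, count in sheet["themes"][stars].most_common(n)
    ]


# Made-up sample reviews for illustration only (not the real store's data).
sheet = new_check_sheet()
record_review(sheet, 5, ["quick", "friendly"])
record_review(sheet, 5, ["fast", "great price"])
record_review(sheet, 5, ["in and out"])
record_review(sheet, 5, ["affordable", "Fast"])
record_review(sheet, 1, ["long wait"])

for theme, pct in top_themes(sheet, 5):
    print(f"{theme}: {pct}%")
```

Each theme is counted only once per review, so a customer who writes "fast" three times still adds a single check mark to the sheet.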

There weren't many bad reviews. The few that had comments mentioned a long wait time, a lack of trustworthiness, or some damage done to the customer's vehicle.

You'll see a larger theme emerge if you look across all the reviews.

Some aggravation usually accompanies a trip to the tire store. Maybe you got a flat tire. Perhaps you're trying to squeeze in car service before work. There's a good chance you're dreading the cost.

When Discount Tire customers are happy, their comments tend to describe some sort of relief. For instance, more than one customer mentioned arriving just before closing and feeling relieved to get great service from helpful and friendly employees.

Take Action!

The purpose of this exercise is to take action!

If I managed that Discount Tire store, I'd make sure employees understood they are in the relief business. (Perhaps they do, since their rating is so high!) Relief is one of the top emotions in customer support.

I'd also respond to negative reviews, like this one:

For private surveys, you'll need a non-anonymous survey or a contact opt-in feature to do this.

Many public rating platforms like Google My Business, Yelp, and TripAdvisor allow you to respond publicly to customer reviews. A polite and helpful response can signal to other customers that you care about service quality.

And you might save that customer, too. One Discount Tire customer changed his 1 star review to a 5 star review after speaking with the manager, who apologized and fixed the issue!

You can watch me do another check sheet in this short video on LinkedIn Learning: