Contact centers struggle to use customer service survey data.

That's the conclusion suggested by a new report from ICMI called Collapse of the Cost Center: Driving Contact Center Profitability. The report, sponsored by Zendesk, focuses on ways that contact centers can add value to their organizations.

Collecting customer feedback is one way contact centers can add value. This feedback can be used to retain customers, improve customer satisfaction, identify product defects, and increase sales.

So, what's the struggle? Here's a statistic that immediately caught my attention:

63% of contact centers do not have a formal voice of the customer program.

Yikes! It's hard to use your contact center as a strategic listening post if you aren't listening.

Let's take a look at some of the report's findings along with some solutions.

Key Survey Stats

Here are some selected statistics from the report.

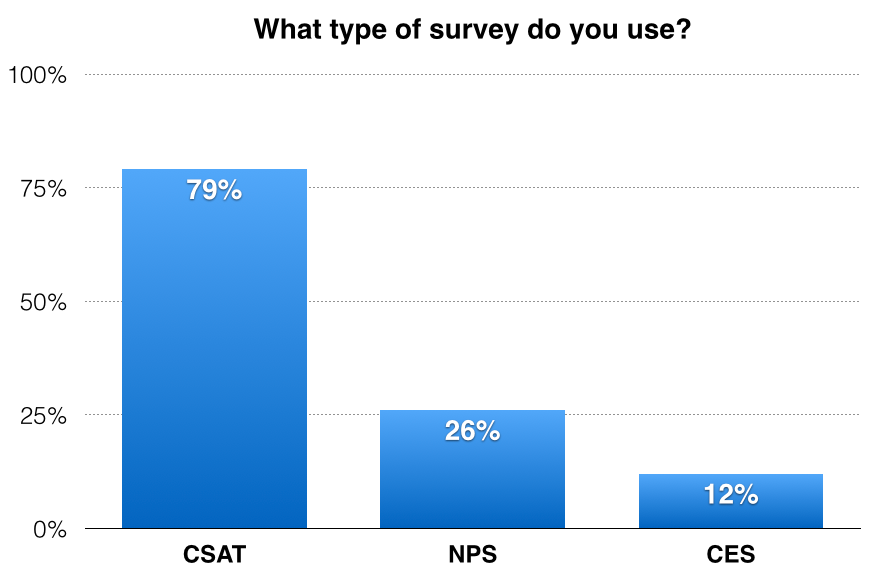

First, let's look at the types of surveys used by contact centers that do have a formal voice of the customer (VOC) program:

Source: ICMI

Customer Effort Score (CES) presents an untapped opportunity.

CES measures customers' perceived effort (see this overview). A good CES program will help companies identify things that annoy customers and create waste. This makes it a great metric for improving efficiency.

Why is efficiency so important in a customer-focused world? Here's another statistic from the ICMI report that explains it:

62% of organizations view their contact center as a cost center.

That means efficiency is one of the most important success indicators for those companies' executives. CES marries cost control and service quality by measuring efficiency from the customer's point of view.
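If you want to see what that looks like in practice, here's a minimal sketch of one way to find the contact reasons that cost customers the most effort. It's my own illustration, not something from the ICMI report. The 1-to-5 scale (where 5 means the most effort), the contact reasons, and the function name `effort_by_reason` are all assumptions, so adjust them to match your own survey.

```python
from collections import defaultdict
from statistics import mean


def effort_by_reason(responses):
    """Return (contact_reason, average_effort) pairs, highest effort first.

    `responses` is an iterable of (contact_reason, effort_score) tuples.
    Assumption: a higher score means the customer reported more effort.
    """
    grouped = defaultdict(list)
    for reason, effort in responses:
        grouped[reason].append(effort)
    averages = {reason: mean(scores) for reason, scores in grouped.items()}
    return sorted(averages.items(), key=lambda item: item[1], reverse=True)


# Hypothetical survey responses, invented for illustration only.
sample = [
    ("billing", 4),
    ("billing", 5),
    ("password reset", 2),
    ("password reset", 1),
    ("shipping", 3),
]

for reason, average in effort_by_reason(sample):
    print(f"{reason}: {average:.1f}")
```

The reasons at the top of the list are where customers are working hardest, which usually means that's also where your contact center is spending extra time.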

Another revealing statistic shows what's not measured:

44% of contact centers don't measure customer retention.

Keeping customers should be the name of the game for contact centers. If you don't measure retention, then it can't be a priority.
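Measuring retention doesn't have to be complicated. Here's a rough sketch using one common definition of retention rate: the customers you have at the end of a period, minus the new ones you added, divided by the customers you started with. The report doesn't prescribe a formula, so treat this definition, the function name, and the numbers as assumptions.

```python
def retention_rate(customers_at_start, customers_at_end, new_customers):
    """Share of the starting customers who are still customers at period end."""
    if customers_at_start <= 0:
        raise ValueError("customers_at_start must be positive")
    retained = customers_at_end - new_customers
    return retained / customers_at_start


# Hypothetical quarter: 2,000 customers at the start, 2,100 at the end,
# and 300 of those 2,100 were acquired during the quarter.
rate = retention_rate(2000, 2100, 300)
print(f"Retention rate: {rate:.0%}")  # Retention rate: 90%
```

Notice that the customer count grew in this example even though 10% of the original customers left. That's exactly the kind of thing you miss when retention isn't tracked on its own.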

Challenges With Surveys

The report highlighted challenges contact centers face with survey data. Here are the top five:

Challenge #1: Using survey data to improve service. Survey data is more than just a score. The key is analyzing the data to get actionable insight. That's a skill that many customer service leaders don't have. One resource is this step-by-step guide to analyzing survey data.

Challenge #2: Getting a decent response rate. Response rate is a misleading statistic. There are two things that are far more important. First, does your survey fairly represent your customer base? Second, is your survey yielding actionable data? Your response rate is irrelevant if you can confidently say "Yes" to these questions.
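One way to check the first question is to compare the mix of people who answered your survey with the mix of your overall customer base. Here's a minimal sketch of that idea. The segments (contact channel, in this case), the counts, and the function names are hypothetical; you could just as easily segment by product, region, or customer tenure.

```python
from collections import Counter


def segment_shares(segments):
    """Map each segment to its share of the total (0.0 to 1.0)."""
    counts = Counter(segments)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {segment: count / total for segment, count in counts.items()}


def representation_gaps(customer_segments, respondent_segments):
    """Respondent share minus customer-base share for each segment.

    Positive means the segment is over-represented in the survey,
    negative means it is under-represented.
    """
    base = segment_shares(customer_segments)
    respondents = segment_shares(respondent_segments)
    return {
        segment: respondents.get(segment, 0.0) - share
        for segment, share in base.items()
    }


# Hypothetical data: one entry per customer, labeled by contact channel.
customers = ["phone"] * 50 + ["chat"] * 30 + ["email"] * 20
survey_respondents = ["phone"] * 35 + ["chat"] * 10 + ["email"] * 5

for segment, gap in representation_gaps(customers, survey_respondents).items():
    print(f"{segment}: {gap:+.0%}")
```

In this made-up example, phone customers are over-represented and chat and email customers are under-represented. A survey like that could have a healthy response rate and still give you a skewed picture.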

Challenge #3: Analyzing data. See challenge #1. You can't improve service if you don't analyze your data to determine what needs to be improved.

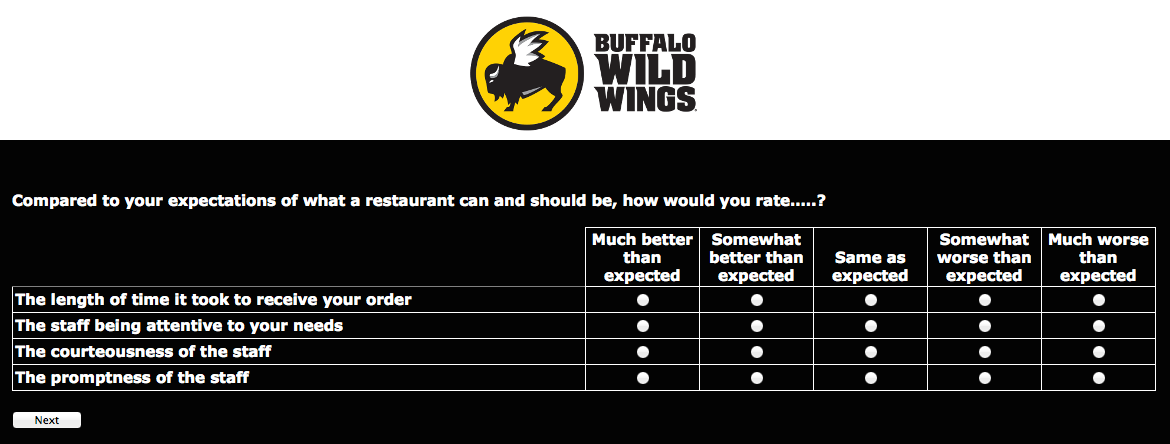

Challenge #4: Designing effective surveys. Survey design is another skill that many customer service leaders don't have. Here's a training video on lynda.com that provides everything you need to get started. You'll need a lynda.com account to take the full course, but you can get a 10-day trial here.

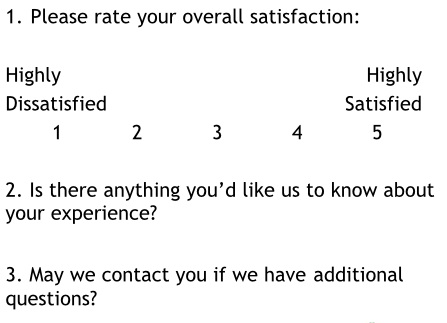

Challenge #5: Taking action to help dissatisfied customers. You'll need a closed-loop survey to tackle this challenge. A closed-loop survey allows customers to opt in for a follow-up contact. Once you add this, it becomes very easy to initiate a program to follow up with upset customers.
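Here's a rough sketch of what that follow-up program might look like once the opt-in question is in place. It's an illustration only. The field names, the 1-to-5 satisfaction scale, and the cutoff of 2 are all assumptions, and your survey tool will have its own export format.

```python
from dataclasses import dataclass


@dataclass
class SurveyResponse:
    customer_id: str
    score: int             # assumed scale: 1 (very dissatisfied) to 5 (very satisfied)
    wants_follow_up: bool  # True if the customer opted in to a follow-up contact


def follow_up_queue(responses, max_score=2):
    """Customers who scored at or below max_score and opted in, unhappiest first."""
    flagged = [r for r in responses if r.wants_follow_up and r.score <= max_score]
    return sorted(flagged, key=lambda r: r.score)


# Hypothetical responses, invented for illustration only.
responses = [
    SurveyResponse("C-101", 5, False),
    SurveyResponse("C-102", 2, True),
    SurveyResponse("C-103", 1, True),
    SurveyResponse("C-104", 1, False),  # unhappy, but did not opt in
    SurveyResponse("C-105", 4, True),   # opted in, but not dissatisfied
]

for response in follow_up_queue(responses):
    print(response.customer_id, response.score)
```

Only customers who are both unhappy and asked to be contacted end up in the queue, so your team knows exactly who to call first.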

Additional Resources

The full report provides a lot more data and advice on leveraging contact centers to improve customer service and profits. It's available for purchase on the ICMI website.

Here are some additional blog posts that can also help:

- Anatomy of a Lousy Survey

- 5 Signs Your Customer Service Survey is Missing the Point

- Why You Should Stop Trying to Improve Your Survey Scores